The status quo is broken. Specifically, resumes, unstructured interviews, and the practice of combining predictive measures using professional judgment don’t work well. Over one hundred years of research in industrial and organizational psychology supports this thesis, so I’m going to skip the basics and dive into the good stuff.

The good stuff, in my opinion, is more toward the cutting edge of decision science. We know that human beings, on their own, are imperfect decision makers. We know that well over 100 documented cognitive biases have an effect on our ability to make optimal decisions. We also know that many decision tools have demonstrated the ability to improve the accuracy of hiring decisions.

Job simulations, cognitive assessments, structured interviews, well-mapped personality assessments, situational judgment tests, biodata, and physical ability assessments are all potentially valuable for predicting job performance. Moreover, when we combine the results from multiple job-related assessments in a statistically optimal, or even just reasonable, fashion, the overall prediction is far more accurate, on average, than predictions made by experts who rely strictly on their “guts” to make these decisions.

To re-phrase the last sentence, algorithms beat experts at predicting future job performance. This general finding has been tested hundreds of ways with different types of predictions and different types of experts. The results are powerful, they are conclusive, and they are massively underutilized in the real world. We aim to help change that by including statistically optimal scoring generated by carefully derived algorithms with all of our assessment results.

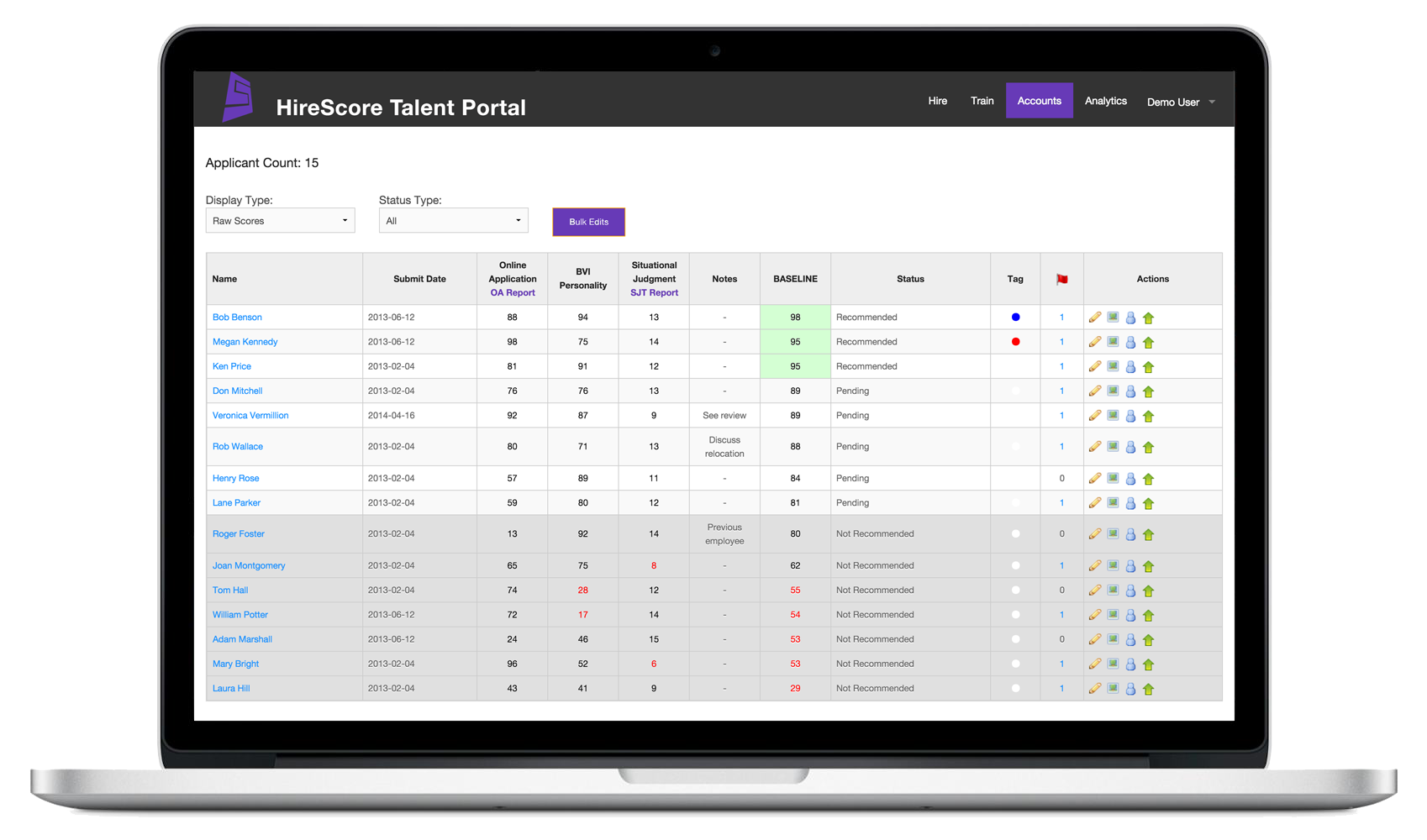

So, for example, if you have a candidate who has taken three assessments, we will provide you with four scores:

Works Hard = 9.1

Works Smart = 6.4

Works Safe = 8.5

Baseline = 8.4

The first three scores indicate the individual assessment results and the final score indicates the overall score, appropriately weighted, across the assessments. We call this weighted composite score a Baseline score.

The Baseline score is the single best indication of a candidate’s probability of success on the job and Baseline scores are directly comparable across candidates. So, in a situation where different candidates have different strengths, the Baseline score can be used to quickly and accurately rank order the candidates in terms of their probability of success on the job.
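To make the idea of a weighted composite concrete, here is a minimal sketch in Python. The weights are my own placeholders, chosen only for illustration; the actual Baseline weights are not given in this post, and the two extra candidates are made up. With these placeholder weights the example candidate above happens to land near 8.4, but that is a coincidence of the numbers I picked, not a statement about how the real score is computed.

```python
# Hypothetical weights for illustration only; the actual Baseline weights
# are not given in this post.
WEIGHTS = {"Works Hard": 0.5, "Works Smart": 0.2, "Works Safe": 0.3}


def baseline_score(scores, weights=WEIGHTS):
    """Weighted average of assessment scores (weights need not sum to 1)."""
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# The first entry uses the scores from the example above;
# the other two candidates are made up to show different strengths.
candidates = {
    "Example candidate": {"Works Hard": 9.1, "Works Smart": 6.4, "Works Safe": 8.5},
    "Made-up candidate B": {"Works Hard": 7.0, "Works Smart": 9.5, "Works Safe": 7.5},
    "Made-up candidate C": {"Works Hard": 8.0, "Works Smart": 8.0, "Works Safe": 6.0},
}

# Rank candidates from highest to lowest composite score.
ranking = sorted(
    candidates,
    key=lambda name: baseline_score(candidates[name]),
    reverse=True,
)

for name in ranking:
    print(f"{name}: {baseline_score(candidates[name]):.1f}")
```

Because every candidate is scored with the same weights, the composite scores sit on one scale and can be sorted directly, even when each candidate is strongest on a different assessment.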

Think about employee selection decisions that you have observed. Most hiring decisions come down to a person or group of people trying to compare candidates in an apples and oranges fashion. Candidate Joe has the most appropriate college degree for the position, candidate Sue has better job experience, candidate Pat had the best energy level in the interview, and candidate Kyle scored highest on the math test.

Who should you pick? How much weight do you put on each of these factors and how do you combine them to look at the “whole person” and compare that person to the needs of the job? Does a college degree really matter for this job? Is job experience at one organization easily transferable to another? Does “energy level” in a 30-minute interview suggest high energy on a day to day basis? Does “high energy” really matter if the person isn’t smart enough to be trainable?

People who have been involved in hiring decisions readily see that the complexity of most decisions quickly goes beyond our abilities. Fortunately, the human brain has wonderful mechanisms for dealing with complexity. One of these mechanisms is the use of simplifying strategies, commonly referred to by decision-making researchers as “heuristics.”

Our brain knows that, on average, a loud noise is more important than a soft noise. Things that smell good are more likely to be edible than things that smell nasty. A restaurant with many cars in the parking lot is more likely to be a good option versus a restaurant with few cars. In all of these cases the rule of thumb has some merit, but it is also likely to lead us astray at times.

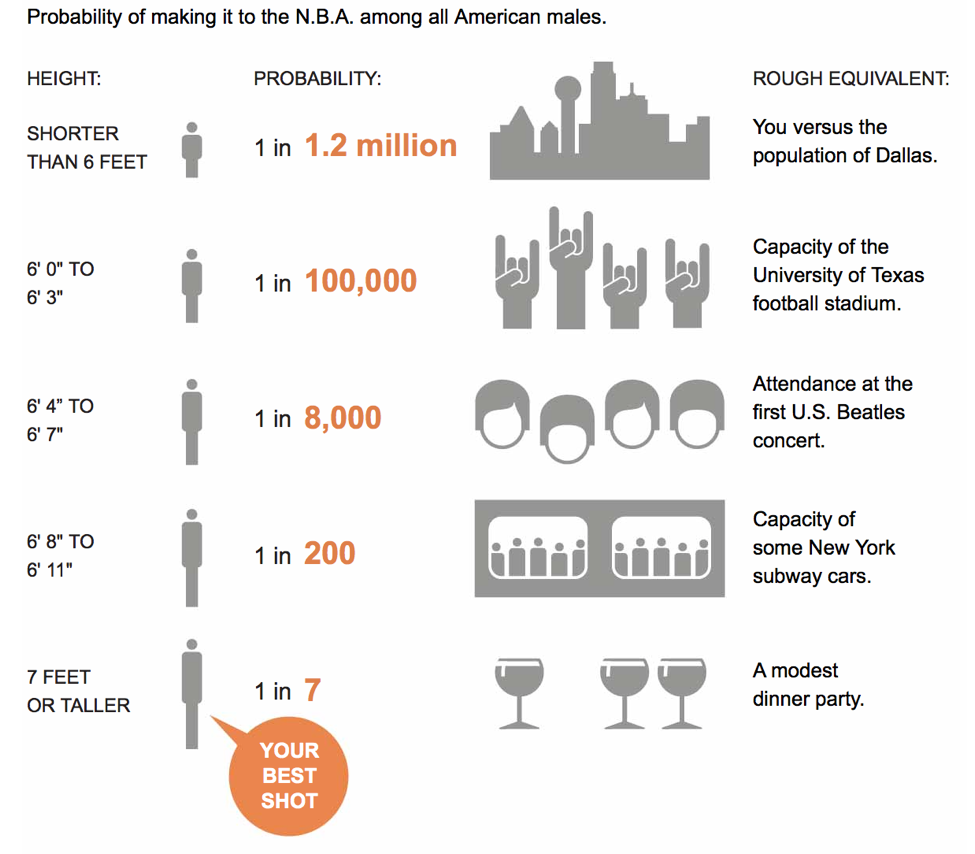

Imagine that you are in charge of picking players for an NBA basketball team. There are lots of players from all over the world to choose from, so you decide that you aren’t going to look at anybody whose height is under 6’. You know that there are some great players who are less than 6’ tall, but you also know that you don’t have time to evaluate every player, and by cutting out all people under 6’ your pool of candidates seems much more manageable.

Cutting part of the pool allows you to focus your time on players with the highest probability of success at the expense of a tiny proportion of great players who are under 6’ tall. This same scenario applies for any type of minimum requirement when hiring. Requiring 5 years of work experience, a 3.0 GPA, or a college diploma will simplify your hiring decision . . . at the expense of precision. What if we could simplify our options without losing precision . . . wouldn’t that be a better option?

As decision makers we face a dilemma. We need lots of data to make an accurate decision and we need to simplify that data to make sense of it. Simplifying is smart, which is why our brain does it automatically using heuristics. Oversimplifying, however, is not smart and leads to many preventable mistakes, which is why we need a better way to make important decisions.

Algorithms provide the better way. An algorithm can efficiently combine data from a wide variety of measures in a fashion that minimizes data loss. An algorithm can rank order 7000 candidates in seconds, and the results are far more accurate than if a team of people scoured the same information for weeks. An algorithm’s accuracy can also be tracked over time, and the algorithm can be replaced when an improved version is available.
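To give a feel for the speed claim, here is a rough sketch that scores and rank orders 7000 simulated candidates. Everything in it is an assumption for illustration: the candidate IDs, the randomly generated scores, and the weights are all made up, and a real system would use validated assessments and empirically derived weights.

```python
import random
import time

# Hypothetical weights for three assessments (illustration only).
WEIGHTS = (0.5, 0.2, 0.3)


def composite(scores, weights=WEIGHTS):
    """Weighted average of one candidate's assessment scores."""
    return sum(s * w for s, w in zip(scores, weights)) / sum(weights)


# Simulate 7000 candidates, each with three scores between 1 and 10.
rng = random.Random(42)
candidates = {
    f"candidate_{i:04d}": tuple(round(rng.uniform(1, 10), 1) for _ in range(3))
    for i in range(7000)
}

# Score and rank order everyone, timing only the ranking step.
start = time.perf_counter()
ranked = sorted(candidates, key=lambda cid: composite(candidates[cid]), reverse=True)
elapsed = time.perf_counter() - start

print(f"Ranked {len(ranked)} candidates in {elapsed:.4f} seconds")
print("Top five:", ranked[:5])
```

The same rule is applied to every candidate, so candidate number 7000 is evaluated exactly as carefully as candidate number 1. That consistency, more than raw speed, is what a tired human review team cannot match.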

Oddly enough, we can model the decision making of experts using policy capturing studies, and the resulting algorithms are more accurate than the people they were modeled after (crazy, but true). In fact, we have modeled the decisions of NFL teams and created algorithms that rank order NFL draft prospects. On average, these algorithms are 36% more accurate than the selections made by actual NFL teams. We’ll get into the details of why this is true in future blog posts, but the simple explanation is that algorithms are extremely consistent whereas humans, even expert humans, are not.
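The consistency explanation can be illustrated with a small simulation. This is a sketch under strong assumptions, not a reproduction of the NFL work or its 36% figure: I assume a simulated expert who weighs two cues correctly on average but applies those weights inconsistently (random noise), and I assume later performance depends on the same two cues plus its own noise. Policy capturing here is just an ordinary least squares regression of the expert's ratings on the cues; all names and numbers are hypothetical.

```python
import random


def mean(xs):
    return sum(xs) / len(xs)


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


def fit_two_predictors(x1, x2, y):
    """Least squares fit of y ~ b0 + b1*x1 + b2*x2 (centered normal equations)."""
    m1, m2, my = mean(x1), mean(x2), mean(y)
    s11 = sum((a - m1) ** 2 for a in x1)
    s22 = sum((b - m2) ** 2 for b in x2)
    s12 = sum((a - m1) * (b - m2) for a, b in zip(x1, x2))
    s1y = sum((a - m1) * (c - my) for a, c in zip(x1, y))
    s2y = sum((b - m2) * (c - my) for b, c in zip(x2, y))
    det = s11 * s22 - s12 ** 2
    b1 = (s1y * s22 - s2y * s12) / det
    b2 = (s2y * s11 - s1y * s12) / det
    return my - b1 * m1 - b2 * m2, b1, b2


rng = random.Random(0)
n = 2000

# Two standardized cues per candidate (for example, two assessment scores).
cue1 = [rng.gauss(0, 1) for _ in range(n)]
cue2 = [rng.gauss(0, 1) for _ in range(n)]

# Assumed truth: performance depends on the cues plus unpredictable noise.
performance = [0.6 * a + 0.4 * b + rng.gauss(0, 1) for a, b in zip(cue1, cue2)]

# Assumed expert: right weights on average, but inconsistent from case to case.
expert_rating = [0.6 * a + 0.4 * b + rng.gauss(0, 1) for a, b in zip(cue1, cue2)]

# Policy capturing: regress the expert's own ratings on the cues.
b0, b1, b2 = fit_two_predictors(cue1, cue2, expert_rating)
model_rating = [b0 + b1 * a + b2 * b for a, b in zip(cue1, cue2)]

expert_validity = pearson(expert_rating, performance)
model_validity = pearson(model_rating, performance)

print(f"Expert ratings vs. performance:  r = {expert_validity:.2f}")
print(f"Model of expert vs. performance: r = {model_validity:.2f}")
```

In this simulation the model of the expert predicts performance better than the expert does, because the regression keeps the expert's average policy and throws away the case-by-case inconsistency. If the simulated expert were perfectly consistent, or were using the wrong cues, the advantage would shrink or disappear, so treat this as an illustration of the mechanism rather than proof of it.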

So, when you think about humans being beaten by algorithms, how do you react? Anger . . . “they” can’t be better than us! Fear . . . what are people going to do if the algorithms start making all the decisions? Disbelief . . . what does this guy know, he’s just a guy writing a blog. Or amazement . . . imagine the great things people could do if they improved their decision making when making life-changing decisions! Many years ago I was a bit irritated that computers were beating chess champions and, much later, Jeopardy champions. Today I have come full circle and I am simply amazed that people can build machines, computers, and algorithms that make people better at doing things they love to do.

Just as a bulldozer amplifies the power of a person and allows her to move more dirt faster than a hundred people digging with their hands, algorithms allow us to make faster, more accurate decisions in a less biased manner. The initial fear and pushback against using algorithms to improve our decision making is both understandable and irrational.

Let’s get over it and start making better decisions.

Just discovered your blog, Spence. Good stuff! Hope you don’t mind if I share some of it with my classes.

Latest response ever. Thanks Gary, share away!